The Right to Information in the Private Sector

Visiting Scholar Lisa Austin argues for a rethinking of digital epistemic rights:

“What the right to information requires is that our digitally-mediated world be created in such a way (through law and policy as well as technological means) that it can be independently knowable and interrogated and that this ability is equitably distributed.”

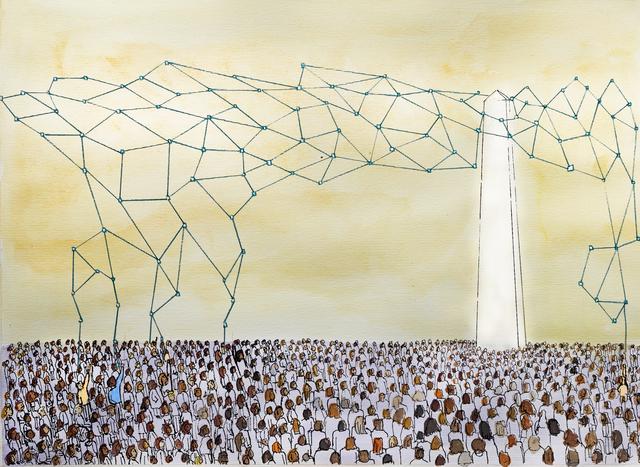

(Photo: Jamillah Knowles & We and AI / Better Images of AI / People and Ivory Tower AI 2 / CC-BY 4.0)

Freedom of information laws, like the US Freedom of Information Act, are a central feature of liberal democracies and provide individuals with rights of access to information held by public authorities. These laws are part of a growing international movement to understand the right to information as a core fundamental freedom within the pantheon of international human rights. The question we need to ask is whether the time has come to extend the reach of these laws and support a right to information in the private sector.

Under many private sector privacy laws, individuals have rights to access their own personally identifiable information that a company has about them. But individuals do not have rights to access data that is not about them. In a world where so many of our activities are digitally mediated in some way, this digital opacity leaves us all increasingly unable to understand key features of our shared social world.

There are some recent notable exceptions. One is the EU’s new Digital Services Act (DSA), which obligates “very large online platforms” and “very large online search engines” to share data with vetted researchers and regulators. The EU Digital Markets Act (DMA) requires that “gatekeepers” provide access to data in a variety of contexts for competitive purposes. In North America, the most important development is the proposed US Platform Accountability and Transparency Act (PATA), which would obligate “large internet” companies to provide access to their data for qualified researchers. These acts all impose various safeguards and modes of oversight regarding this access, including protections for user privacy.

The need for these initiatives is strong. Research access facilitates independent analysis of the impact of platforms and search engines and ensures that this access is not dependent upon corporate altruism. Whether the issue is litigating the harms of social media, misinformation regarding the Israel-Hamas conflict, or determining the value of news content shared by platforms, we cannot arrive at the best policy responses without evidence-based research. Initiatives like the DMA seek to ensure better competition in digital markets, with many potential benefits to consumer choice. However, instead of thinking about access to data as part of the implementation of policies aimed at goals like social media accountability or market competition, we should frame access to data as an independent right – the right to information – and seek to better understand its scope and normative foundations.

The unique features of our digital environment suggest that the right of access to data is too limited an expression of the right to information and that we might need a broader understanding of epistemic rights. For example, managing the privacy issues associated with such access can be complex, which raises the question of whether access will be equitably distributed or will instead fall to researchers from well-resourced institutions. Sometimes the issue is not access but timely interventions. For example, some of the work done by the US 2020 Facebook & Instagram Research Election Study involved running experiments to investigate the impact of changes to the algorithms that determined what (consenting) research subjects saw. This was done with the cooperation of Facebook and Instagram, but we could imagine a world in which independent tests could be required in some circumstances. At other times what is needed is that digital infrastructure be configured in ways that facilitate data donation by users. These examples all get at a set of deeper issues than access to data to study social risks and harms – what the right to information requires is that our digitally-mediated world be created in such a way (through law and policy as well as technological means) that it can be independently knowable and interrogated and that this ability is equitably distributed.

The DSA and PATA fit within some of the classic rationales offered for the right to information. The rationale of accountability is the clearest. The DSA conceives of researcher access as part of the overall purpose of detecting, identifying, and understanding systemic risks associated with platforms and search engines. It is situated, in other words, within an overall legislative framework aimed at making platforms and search engines accountable for the risks that they impose. Traditionally, the right to information also has a democratic rationale. Researcher access to internet company data fits within this by providing better evidence with which governments can make policy proposals and citizens can evaluate them.

However, the right to information in the private sector is supported by additional rationales tailored to its context. In addition to democratic participation, we need to think about the fair and just terms of social and economic participation. This takes us beyond the kind of researcher access provided in the DSA and PATA, or the competition policy underpinning the DMA. Instead, it supports broader labor-related claims such as Uber drivers seeking data about the platform, or creators seeking transparency from platforms and brands. In these examples, corporate data enclosure creates information asymmetries that can be exploitative. A social and economic participation rationale would also support projects like Block Party, a company that created anti-harassment tools for Twitter but ceased to operate when X blocked access to its data. In this example, corporate data enclosure prevents vulnerable users of a large platform from taking self-protective measures.

There is also a participation aspect to questions of research access to data. The DSA puts in place some conditions on research access, but it does not require either consultation or collaboration when the proposed research might affect communities in particular ways. The preamble indicates that companies should do this type of community consultation when engaged in their own risk assessment and risk mitigation activities but it is silent in the provisions regarding data access. There are other examples of community consultation, collaboration, and decision-making in the context of data collection and access that we might look to. For example, a recognition of Indigenous Data Sovereignty requires Indigenous authority over research on data about Indigenous peoples. In light of this, many researchers in Canada follow the OCAP® principles that recognize Indigenous ownership and control over data about Indigenous peoples. The Canadian province of British Columbia has recently passed legislation (the Anti-Racism Data Act) that requires consultation and collaboration with affected communities when public agencies collect data “for the purposes of identifying and eliminating systemic racism and advancing racial equity”. Since one of the concerns about platforms and search engines is that they perpetuate biases and discrimination, we cannot ask the question of access without also asking who gets a seat at the table when deciding about access.

The DSA, DMA, and PATA are a good start to recognizing a right to information in the private sector. However, this is just the beginning of a debate about a much more robust set of epistemic entitlements. And just like with public sector freedom of information laws, access rights will always be subject to justified limits. What we can no longer do is ignore the need to have this debate.